Vibe Coding The Holidays Away

A data-driven breakdown of what attackers are actually hunting for on the open internet — and what defenders should be watching.

Over the holiday break, I deployed a set of web honeypots on DigitalOcean and let them soak. No fancy banners, no fake login portals: just nginx instances logging every request to Loki, with a daily analysis pipeline crunching the data into structured threat models. The honeypots ran from January 1–16, 2026, collecting 71,768 total requests from roughly 300 to 400 unique IPs per day, across tens of thousands of unique URI paths.

Over the holidays I had some free time, so I sat down to build a project I had been kicking around for a while. I wanted to see whether I could build a honeypot network across global cloud infrastructure, forward the logs to a single destination (my aggregator), and then analyze them for noteworthy trends.

What came back isn’t groundbreaking in the “zero-day” sense. But it paints one of the clearest pictures I’ve seen of what the automated internet actually looks like when it hits your infrastructure — and more importantly, what it’s looking for. If you’re building hunt hypotheses, tuning detections, or trying to prioritize hardening, this is the kind of ground truth that matters.

Here’s what the data said.

The Shape of the Noise

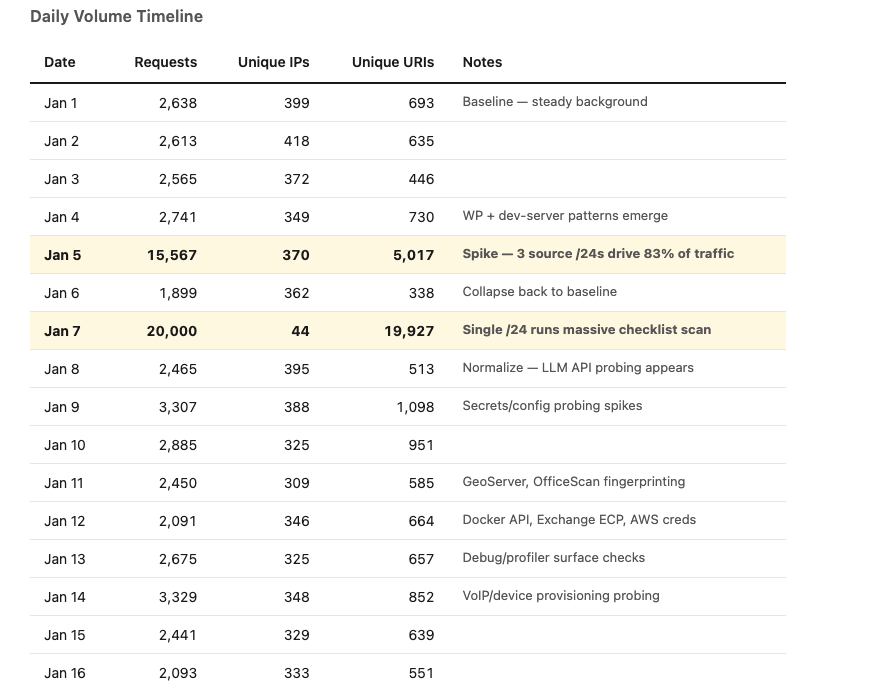

Before diving into what was targeted, let’s look at the volume and rhythm.

Two things jump out immediately:

The baseline is remarkably consistent. On quiet days, you’re looking at ~2,500 requests from 300–400 unique IPs. This is the internet’s ambient scanning noise, distributed across many sources running standard checklists. Your average exposed service gets probed roughly this much every single day whether you notice or not.

The spikes tell a different story. January 5th saw a 6x volume surge driven by just three AWS-based /24 networks (13.59.55.0/24, 54.234.91.0/24, 54.175.183.0/24) running what appears to be coordinated broad enumeration. January 7th was even more dramatic — a single /24 (144.91.101.0/24) fired off 19,873 requests in 24 hours, cycling through nearly 20,000 unique URI paths. That’s one scanner working through a massive filename permutation list of backup files, config artifacts, and secret files across /api, /admin, /core, and /backup prefixes.

The contrast matters: Jan 7 had only 44 unique IPs but nearly 20,000 unique URIs, while a typical day has 350+ IPs but only 500–700 URIs. The spike wasn’t a DDoS; it was a single, methodical scanner running a very large dictionary. The real dictionary may be even larger than what I recorded: I hadn’t expected that many hits, and the logs were truncated at 20,000 lines per day.
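To make that contrast measurable, here is a minimal sketch that profiles each day by request count, unique source IPs, and unique URIs. It assumes the log lines have already been parsed into (day, client_ip, uri) tuples; the function names and the 50-URIs-per-IP threshold are illustrative assumptions, not values from my pipeline.

```python
from collections import defaultdict

def daily_profile(records):
    """Summarize (day, ip, uri) records into per-day request, IP, and URI counts."""
    ips = defaultdict(set)
    uris = defaultdict(set)
    requests = defaultdict(int)
    for day, ip, uri in records:
        requests[day] += 1
        ips[day].add(ip)
        uris[day].add(uri)
    return {
        day: {
            "requests": requests[day],
            "unique_ips": len(ips[day]),
            "unique_uris": len(uris[day]),
            # Many distinct paths from few sources suggests one big dictionary run.
            "uris_per_ip": len(uris[day]) / len(ips[day]),
        }
        for day in requests
    }

def dictionary_days(profile, min_uris_per_ip=50.0):
    """Return days whose URI-to-IP ratio meets a (hypothetical) threshold."""
    return sorted(d for d, p in profile.items() if p["uris_per_ip"] >= min_uris_per_ip)
```

With the round numbers above, Jan 7 works out to roughly 20,000 / 44 ≈ 450 URIs per IP, versus about 600 / 350 ≈ 1.7 on a typical day, so almost any threshold in between separates the two.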

What They’re Actually Hunting For

Here’s where it gets actionable. Across 16 days, I classified every URI into families based on what technology or misconfiguration it targets. The top 10 families by total volume:

1. Environment Files & Secrets — 12,365 hits (every single day)

Paths: /.env, /config.zip, /app/.env, /.env.ts, /api/env.zip, /.aws/credentials, /assets/credentials.json

This was the single most persistent category across the entire observation window. Every single day, scanners checked for exposed dotenv files, config archives, and cloud credential artifacts. The Jan 7 spike included 8,438 env-family requests alone — a massive run through .env path permutations.

The scanner toolkits aren’t just checking /.env anymore. I saw probes for .env.ts, .env.production, env.zip, and even /.aws/credentials and /.aws/config. They’re adapting to modern deployment patterns.

Hunt angle: If your org deploys to cloud infrastructure, check your external attack surface for any 200 responses to dotenv paths. One exposed .env file is a full credential compromise. Also worth hunting for any CI/CD pipelines that might accidentally publish these to web roots.
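A minimal sketch of that external check, using only the Python standard library. The path list comes from the probes above. The "KEY=value and not HTML" test is a hypothetical heuristic meant to weed out catch-all pages that return 200 for every path. Only run it against hosts you own or are authorized to test.

```python
import urllib.request
from urllib.parse import urljoin

# Paths observed in the honeypot logs for the env/secrets family.
DOTENV_PATHS = ["/.env", "/app/.env", "/.env.production", "/.env.ts",
                "/.aws/credentials", "/.aws/config"]

def probe_urls(base_url, paths=DOTENV_PATHS):
    """Build absolute probe URLs for a base such as https://example.com."""
    base = base_url.rstrip("/") + "/"
    return [urljoin(base, p.lstrip("/")) for p in paths]

def looks_like_secret_file(status, body):
    """Heuristic: a 200 whose body has KEY=value pairs and is not an HTML page."""
    return status == 200 and "=" in body and "<html" not in body.lower()

def check_host(base_url, timeout=5.0):
    """Return the probe URLs on base_url that look like exposed secret files."""
    findings = []
    for url in probe_urls(base_url):
        try:
            with urllib.request.urlopen(url, timeout=timeout) as resp:
                body = resp.read(4096).decode("utf-8", errors="replace")
                if looks_like_secret_file(resp.status, body):
                    findings.append(url)
        except OSError:
            # HTTPError (4xx/5xx), URLError, and timeouts all subclass OSError;
            # an error response or an unreachable host is not a finding.
            continue
    return findings
```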

2. Application Files & PHP Supply Chain — 10,903 hits (15 of 16 days)

Paths: /vendor/phpunit/phpunit/src/util/php/eval-stdin.php, /vendor/phpunit/phpunit/util/php/eval-stdin.php

Legacy PHP supply chain exploitation is alive and well. The phpunit eval-stdin.php path — a file that shouldn’t exist in production but does when vendor directories are accidentally web-accessible — was one of the most consistently probed targets. Multiple path variants are checked simultaneously, suggesting scanner dictionaries include known path permutations across different phpunit versions.

Beyond phpunit, this family includes broad .php, .asp, .aspx, .jsp probing, effectively fingerprinting what server-side technologies are present.

Hunt angle: Check for web-accessible /vendor/ directories in any PHP deployments. If phpunit’s eval-stdin.php is reachable and the installed version is vulnerable (CVE-2017-9841), you have unauthenticated RCE. Also audit your build pipelines: do they strip vendor/test directories from production artifacts?
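For the build-pipeline side, a small sketch that walks a build artifact before deployment and flags any phpunit eval-stdin.php files. It assumes the artifact is a directory tree you can walk; the function name is hypothetical, and you would wire it into whatever CI step produces your release bundle.

```python
from pathlib import Path

def find_phpunit_eval_stdin(artifact_root):
    """Return eval-stdin.php files inside vendor/phpunit trees of a build artifact.

    This is the file abused by CVE-2017-9841; it should not ship to production.
    """
    root = Path(artifact_root)
    hits = []
    for path in root.rglob("eval-stdin.php"):
        rel = path.relative_to(root)
        # Only flag copies that live under a vendor/phpunit dependency tree.
        if "vendor" in rel.parts and "phpunit" in rel.parts:
            hits.append(rel.as_posix())
    return sorted(hits)
```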

3. Backup File & Config Artifact Enumeration — 6,500+ hits (concentrated spikes)

Paths: /api/error.bak, /admin/backup/database.bak, /core/backup/database.conf, /backup/database.cfg

The January 7th scanner drove this almost entirely. It systematically checked /api, /admin, /core, and /backup prefixes combined with backup extensions (.bak, .cfg, .conf, .old, .save, .sql). The approach was pure permutation — take common directory prefixes, combine with common sensitive filenames, append every backup extension in the book.

This is a numbers game. Across thousands of targets, even a tiny percentage of accidentally published database backups or config files yields immediate credential access.
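The permutation approach is easy to reproduce, and doing so shows why one scanner can emit tens of thousands of unique paths in a day. The prefixes and extensions below come from the January 7th traffic; the basename list is a hypothetical sample.

```python
from itertools import product

# Prefixes and extensions observed in the Jan 7 sweep; basenames are a hypothetical sample.
PREFIXES = ["/api", "/admin", "/core", "/backup"]
BASENAMES = ["database", "config", "error", "backup", "db"]
EXTENSIONS = [".bak", ".cfg", ".conf", ".old", ".save", ".sql"]

def backup_candidates(prefixes, basenames, extensions):
    """Yield every prefix/basename+extension combination, as a permutation scanner would."""
    for prefix, name, ext in product(prefixes, basenames, extensions):
        yield f"{prefix}/{name}{ext}"

candidates = list(backup_candidates(PREFIXES, BASENAMES, EXTENSIONS))
```

Each basename adds 4 × 6 = 24 paths, so a dictionary of about 830 basenames against these same prefixes and extensions already produces roughly 20,000 candidates.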

Hunt angle: Scan your own web roots for backup extension files. Any .bak, .sql, .old, .cfg file accessible via HTTP is a finding. Add deny rules at your web server/WAF layer for these extensions globally.
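To scan your own web roots, here is a sketch that walks a document root on disk and lists files with the backup extensions seen in the probes. It assumes you can run it on the web server itself or against a copy of the deployed tree; the function name is hypothetical, and matching is case-insensitive.

```python
import os

# Backup-style extensions seen in the honeypot probes.
BACKUP_EXTENSIONS = (".bak", ".sql", ".old", ".cfg", ".conf", ".save")

def find_backup_files(web_root):
    """Return paths (relative to web_root) of files with backup-style extensions."""
    hits = []
    for dirpath, _dirnames, filenames in os.walk(web_root):
        for name in filenames:
            # Case-insensitive so DATABASE.BAK is caught too.
            if name.lower().endswith(BACKUP_EXTENSIONS):
                hits.append(os.path.relpath(os.path.join(dirpath, name), web_root))
    return sorted(hits)
```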

4. Login & Authentication Surface Discovery — 2,262 hits (10 days)

Paths: /login, /api/login, /signin, /auth, /core/skin/login.aspx, /owa/auth/logon.aspx

Scanners are cataloging which authentication endpoints exist, not (yet) brute-forcing them but mapping the surface. I saw probes for generic login paths alongside specific product surfaces: ASP.NET login forms, Outlook Web Access, and API authentication endpoints.

Hunt angle: This is reconnaissance. If these endpoints exist in your environment, ensure MFA is enforced, rate limiting is in place, and you’re monitoring for the credential stuffing that follows discovery.

5. Git Repository Exposure — 686 hits (13 of 16 days)

Paths: /.git/config, /.git/index, /.git/info/refs

Persistent, steady, and high-impact. Git exposure was checked on 13 of the 16 days, often at above-baseline volume. If /.git/config returns 200, the rest of the .git directory is often retrievable too, and an attacker can reconstruct your source code repository, including hardcoded secrets, internal API endpoints, and deployment configurations.

Hunt angle: This is one of the easiest wins for external attack surface validation. Test your own internet-facing services for /.git/config access. Block all dotfile directories at the web server level.
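When validating /.git/config, a bare 200 can mislead, since some sites serve a catch-all page for every path. Below is a sketch of a stricter check, assuming you already have the response status and body. A real Git config normally contains a [core] section, and any url lines reveal where the repository lives. Both function names are hypothetical.

```python
import re

# Matches "url = ..." lines; in a Git config these normally sit in [remote] or [submodule] sections.
_REMOTE_URL = re.compile(r"^\s*url\s*=\s*(\S+)", re.MULTILINE)

def git_config_exposed(status, body):
    """True when a /.git/config response looks like an actual Git config file."""
    return status == 200 and "[core]" in body and "<html" not in body.lower()

def remote_urls(body):
    """Extract remote URLs, which often reveal where the repository is hosted."""
    return _REMOTE_URL.findall(body)
```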

6. WordPress — 551 hits (7 days)

Paths: /wp-config.php.bak, /wp-content/w3tc-config/master-preview.php, /xmlrpc.php, /wp-admin, /wp-login.php

WordPress scanning came in waves, not constantly. When it appeared, it focused on configuration file backups (wp-config.php.bak, wp-config.php.old) and known vulnerable plugin paths rather than just login brute-forcing.

7. Dev-Server / Vite File Read (/@fs/) — 350 hits (3 days, sharp spikes)

Paths: /@fs/etc/passwd?import=, /@fs/.docker.env?import=, /@fs/proc/self/environ?raw??=

This was one of the more interesting findings. The /@fs/ pattern targets Vite dev server file-read vulnerabilities (CVE-2023-34092 and related). When a vulnerable Vite dev server is accidentally exposed to the internet, crafted /@fs/ requests can bypass its server.fs restrictions and read arbitrary files from the host filesystem.

The scanners were specifically targeting /etc/passwd, .docker.env, and /proc/self/environ through this path — all high-value for credential harvesting or container escape.

Hunt angle: This is a great detection engineering target. Any production system responding to /@fs/ requests has a dev server exposed. Hunt for Vite or similar dev servers bound to 0.0.0.0 in production environments. The ?import= query parameter is highly specific and makes a clean detection signature.
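As a starting point for that signature, a sketch that grades request targets from access logs: "high" for /@fs/ paths carrying the ?import or ?raw query keys seen in these probes, "low" for any other /@fs/ request. It tolerates repeated leading slashes. The severity labels are my own, and in practice you would translate the logic into your SIEM’s query language.

```python
import re

# /@fs/ at the start of the path, tolerating repeated leading slashes.
VITE_FS_PATH = re.compile(r"^/+@fs/", re.IGNORECASE)
# Query keys seen alongside /@fs/ in the honeypot logs (?import=, ?raw??=).
SUSPICIOUS_QUERY = re.compile(r"(^|[?&])(import|raw)\b", re.IGNORECASE)

def vite_fs_severity(request_target):
    """Return 'high', 'low', or None for a raw request target like '/@fs/etc/passwd?import='."""
    # Split manually: urlsplit would treat a leading '//' as a network location.
    path, _, query = request_target.partition("?")
    if not VITE_FS_PATH.match(path):
        return None
    return "high" if SUSPICIOUS_QUERY.search(query) else "low"
```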

8. Cisco ASA VPN Gateway (/+cscoe+/, /+cscol+/) — 201 hits (6 days)

Paths: /+cscoe+/logon.html, /+cscoe+/logon_forms.js, /+cscol+/java.jar

Perimeter device fingerprinting for Cisco ASA VPN gateways (the /+CSCOE+/ and /+CSCOL+/ prefixes are Cisco WebVPN paths). Consistent low-volume probing to identify whether these appliances exist and are reachable. Once fingerprinted, follow-on exploitation of known CVEs is the playbook.

9. AI/LLM API Endpoint Discovery — 140 hits (appeared Jan 8)

Paths: /v1/messages, /v1/chat/completions, /openai/v1/chat/completions, /openai/deployments/gpt-4/chat/completions?api-version=2024-02-15-preview

This one caught my attention. Starting January 8th, I observed scanners probing for exposed LLM API endpoints, checking for OpenAI-compatible and Anthropic-style API paths. The requests targeted both generic paths (/v1/chat/completions) and Azure OpenAI deployment-specific paths with version parameters.

This is a relatively new addition to scanner dictionaries. Exposed LLM API proxies represent a direct financial risk (token theft/abuse) and a potential data exfiltration vector if the API has access to internal knowledge bases or RAG systems.

Hunt angle: Search your environment for any services exposing /v1/chat/completions or /v1/messages to the internet without authentication. If you run LLM inference proxies, API gateways, or development endpoints, confirm they’re not accidentally internet-facing. This is a fresh hunting target that most orgs probably aren’t monitoring for yet.
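One way to start that hunt from your own edge logs: a sketch that flags any host where an LLM API path answered with a 2xx status. It assumes you can extract (host, uri, status) tuples from your logs. The path patterns are the ones observed in the honeypot traffic, so extend them for other OpenAI-compatible routes you run.

```python
import re

# Paths seen in the probes: generic /v1 routes and Azure OpenAI deployment routes.
LLM_PATH = re.compile(
    r"^(?:/openai)?/v1/(?:chat/completions|messages)$"
    r"|^/openai/deployments/[^/]+/chat/completions$",
    re.IGNORECASE,
)

def exposed_llm_endpoints(entries):
    """Return sorted (host, path) pairs where an LLM API path answered with 2xx.

    `entries` is an iterable of (host, uri, status) tuples from your own edge logs.
    """
    exposed = set()
    for host, uri, status in entries:
        path = uri.split("?", 1)[0]
        if LLM_PATH.match(path) and 200 <= status < 300:
            exposed.add((host, path))
    return sorted(exposed)
```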

10. Management Surfaces — Steady Background

Across the 16 days, I also saw consistent probing for:

- Spring Boot Actuator (/actuator, /actuator/gateway/routes, /env, /health): 118 hits across 6 days
- Docker Remote API (/containers/json): 57 hits across 5 days
- Tomcat Manager (/manager/text/list, /manager/html): 45 hits across 5 days
- GeoServer (/geoserver/web): 29 hits across 2 days
- Trend Micro OfficeScan (/officescan/console/cgi/cgichkmasterpwd.exe): appeared once

Each of these individually is low volume. Collectively, they represent scanners maintaining checklists of management interfaces that, when exposed, provide immediate administrative access or sensitive configuration disclosure.

The Scanner Ecosystem

Source Concentration

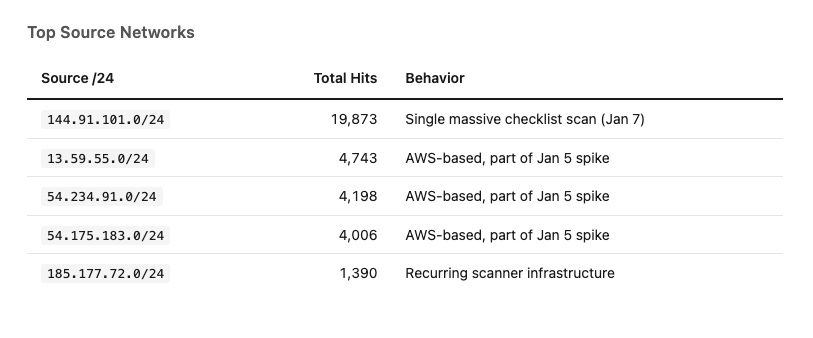

One of the clearest patterns was how source-concentrated the traffic was: the top 5 source networks accounted for the vast majority of requests.

The Jan 5 spike was three AWS /24s working together — likely a single operator using EC2 instances for distributed scanning. The Jan 7 spike was a single Contabo-hosted /24 running a much larger, more comprehensive dictionary against fewer targets.

Background noise comes from a rotating cast of 300–400 IPs per day, mostly running shorter, more focused checklists. The heavy hitters are episodic and concentrated.
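The /24 rollup behind these numbers is straightforward with Python’s ipaddress module. A sketch, assuming IPv4 source addresses as strings with one entry per request; the function names are hypothetical.

```python
import ipaddress
from collections import Counter

def to_slash24(ip):
    """Collapse an IPv4 address to its /24, e.g. '144.91.101.7' -> '144.91.101.0/24'."""
    addr = ipaddress.ip_address(ip)
    if addr.version != 4:
        raise ValueError(f"expected an IPv4 address, got {ip!r}")
    return str(ipaddress.ip_network(f"{addr}/24", strict=False))

def top_networks(source_ips, n=5):
    """Return (network, requests, share_of_total) for the n busiest /24s.

    `source_ips` holds one entry per request, so repeat IPs count every hit.
    """
    counts = Counter(to_slash24(ip) for ip in source_ips)
    total = sum(counts.values())
    return [(net, hits, hits / total) for net, hits in counts.most_common(n)]
```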

Scanner Dictionary Evolution

Day-over-day, I tracked which URI families appeared as “new” (never seen before in the observation window). The pattern suggests scanner operators are actively refreshing their dictionaries:

- Jan 1: Tomcat Manager, .well-known/security.txt
- Jan 4: WordPress probing increase; /@fs/ dev-server pattern appears
- Jan 5: Broad enumeration surge with /@fs/ and secrets emphasis
- Jan 7: Massive backup/config permutation sweep (/api, /admin, /core, /backup)
- Jan 8: AI/LLM API endpoints (/v1/messages, /openai/*) appear for the first time
- Jan 11: GeoServer, OfficeScan fingerprinting
- Jan 12: Docker API, Exchange ECP export tool, AWS credential files
- Jan 14: VoIP/Polycom provisioning config fetches, embedded device login forms (/boaform/admin/formlogin)
- Jan 16: Increased Spring Actuator and embedded admin path checks

The LLM endpoint probing starting Jan 8 is particularly notable — it suggests these scanning tools are being updated to reflect the current technology landscape, not just running stale lists from 2020.
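Detecting a “new” family is a running set difference over days. A sketch, assuming you already have the set of families seen each day (for example from a regex classifier), keyed by ISO date so that sorting is chronological; the function name is hypothetical.

```python
def new_families_by_day(families_by_day):
    """Map each day to the URI families first observed on that day.

    `families_by_day` is {iso_date: iterable_of_family_names}; ISO dates sort chronologically.
    """
    seen = set()
    first_seen = {}
    for day in sorted(families_by_day):
        todays = set(families_by_day[day])
        first_seen[day] = sorted(todays - seen)
        seen |= todays
    return first_seen
```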

Defensive Takeaways

If you’re building hunts or hardening your environment based on this data, here’s where to start:

Quick wins (external attack surface):

- Block dotfile access (/.env, /.git/*, /.aws/*) at the edge. If any of these return 200 from your infrastructure, treat it as a confirmed finding.
- Deny backup extensions (.bak, .old, .cfg, .conf, .save, .sql) across all web-accessible paths.
- Validate that /vendor/ directories are not web-accessible in PHP deployments.

Detection engineering targets:

- /@fs/ requests with an ?import= parameter: a highly specific Vite dev-server file-read indicator.
- /v1/chat/completions or /v1/messages on unexpected hosts: exposed LLM infrastructure.
- /containers/json: Docker Remote API exposure check.
- /actuator/gateway/routes: Spring Cloud Gateway route disclosure.
- /proc/self/environ: local file read validation attempt.

Hunt hypotheses:

- Are any internal dev servers (Vite, webpack-dev-server, Next.js dev mode) accidentally bound to external interfaces?
- Do any CI/CD pipelines publish .env, .git, or vendor test directories to production web roots?
- Are LLM API proxies, inference endpoints, or development servers exposed without authentication?
- Are any Spring Boot services running with Actuator endpoints exposed and unauthenticated?

Methodology Notes

The honeypots were nginx instances forwarding all access logs to a centralized Loki instance via Grafana’s log aggregation stack. A daily Python analysis pipeline pulled the raw logs, classified URIs into technology families using regex-based rules, tracked source IP concentrations at the /24 level (to capture infrastructure patterns), and generated structured threat models with day-over-day delta analysis.

Request counts were capped at 20,000 per 24-hour window and 40,000 per 7-day window in the collection pipeline, meaning actual volumes on spike days (particularly Jan 5 and Jan 7) may have been higher than reported. The 16-day observation window provides a useful snapshot but shouldn’t be treated as a comprehensive survey — regional and temporal variation in scanning patterns is expected.
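The URI family classification can be approximated with an ordered list of regular expressions where the first match wins. This is a simplified sketch: the family labels mirror the sections above, but the patterns are illustrative approximations rather than the exact rules in the repository linked below.

```python
import re

# Illustrative rules; order matters because the first matching family wins.
FAMILY_RULES = [
    ("vite_fs", re.compile(r"^/+@fs/", re.I)),
    ("git_exposure", re.compile(r"/\.git/", re.I)),
    ("env_secrets", re.compile(
        r"(^|/)\.env|/env\.zip$|(^|/)config\.zip$|/\.aws/(credentials|config)$|credentials\.json$",
        re.I)),
    ("phpunit", re.compile(r"/vendor/phpunit/.*eval-stdin\.php$", re.I)),
    ("llm_api", re.compile(r"^(/openai)?/v1/(chat/completions|messages)|^/openai/deployments/", re.I)),
    ("wordpress", re.compile(r"/wp-(admin|login\.php|config\.php|content/)|/xmlrpc\.php", re.I)),
    ("backup_artifacts", re.compile(r"\.(bak|old|save|sql|cfg|conf)$", re.I)),
    ("login_surface", re.compile(r"/(login|signin|auth)\b|logon\.aspx$", re.I)),
]

def classify(uri):
    """Return the first matching family for a request URI, or 'other'."""
    path = uri.split("?", 1)[0]  # classify on the path; query strings vary too much
    for family, pattern in FAMILY_RULES:
        if pattern.search(path):
            return family
    return "other"
```

Order matters: /wp-config.php.bak lands in the WordPress family rather than the generic backup family because the WordPress rule is checked first.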

The raw data and analysis pipeline are available for anyone interested in replicating or extending this work, so reach out if you want to compare notes. The code is also on my GitHub if you want to deploy this yourself:

https://github.com/eliwoodward/Holiday-Honeypot-Vibecoded/tree/main

Happy thrunting. 🔥
