When to Stop Hunting

The Art of Knowing You’ve Looked Hard Enough

I’ve been doing this long enough to know that starting a hunt is the easy part. You’ve got a hypothesis, you’ve got your data sources queued up, you’ve got that first-cup-of-coffee energy. The hard part? Knowing when you’re done.

Nobody teaches this. I’ve read dozens of hunting guides, sat through countless conference talks, and co-authored an entire framework. We spend so much effort on how to start a hunt, how to form a hypothesis, how to pick your data sources. But the question of when to stop gets a hand wave at best.

And that’s a problem. Because right now, most of us are stopping hunts based on vibes.

The Vibes Problem

Be honest. How do you currently decide a hunt is over?

“It feels like I’ve looked at enough.” “We ran out of time.” “I didn’t find anything, so I guess we’re good?” “My boss asked for the report.”

None of those are termination criteria. Those are circumstances. There’s a difference between stopping a hunt and a hunt being done.

I’ve watched hunters burn through a full week chasing phantom lateral movement because they couldn’t articulate what “done” looked like. I’ve also watched hunters close a hunt in four hours because they ran a few queries, got no hits, and called it. Both are failure modes. One wastes resources. The other creates false confidence.

We need something better than vibes.

Coverage Criteria: Did You Actually Look?

The first question to ask yourself before closing a hunt: did I actually examine all the data sources that matter for this hypothesis?

This sounds obvious but it really isn’t.

On one of my first hunts ever, I was hunting PowerShell activity and completely missed the Windows script logging events. Partly because I was new, but also because I didn’t document my data sources. There was no list of “here’s what I need to look at.” So I looked at what I knew about and moved on, thinking I’d covered it.

I hadn’t. And I didn’t realize it until way later.

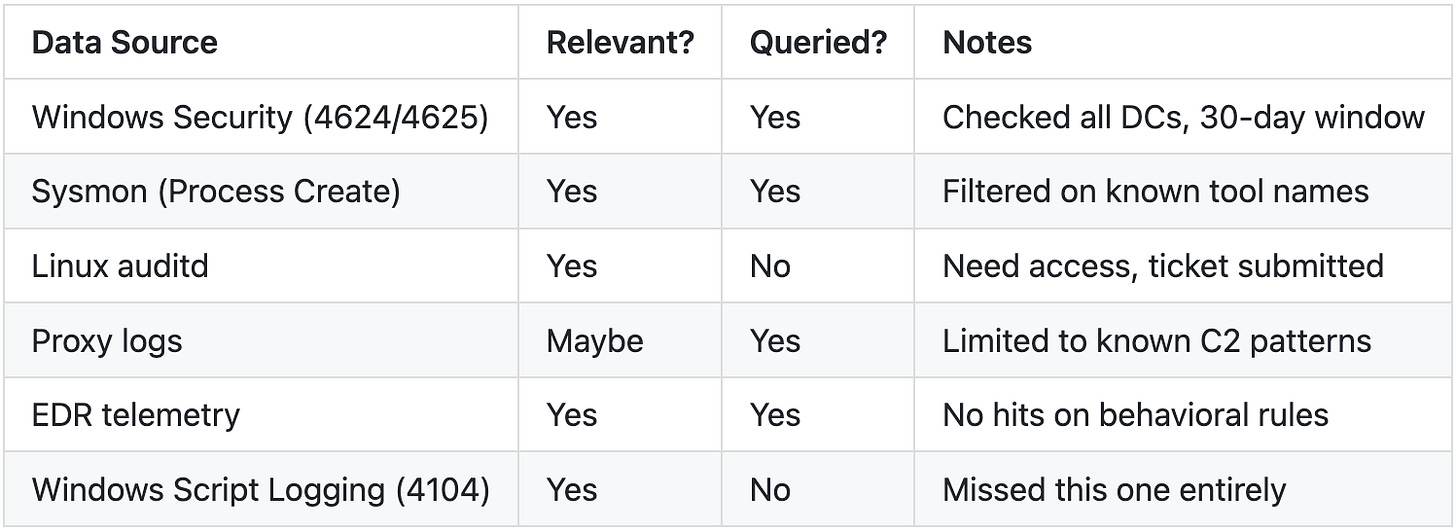

Here’s what you can do. At the start of every hunt, write down every data source that’s relevant to your hypothesis. Not “everything we have,” but the specific sources that could contain evidence of the behavior you’re looking for. Then track which ones you’ve actually queried. A simple table in a notebook works: one row per data source, with a column for whether you’ve queried it yet.

If there’s a “No” in that queried column when I’m thinking about closing the hunt, I’m not done. I either need to go look at it or explicitly document why I couldn’t (access issues, data not available, retention gap) and flag that as a coverage gap in my findings.

Coverage isn’t just data sources either. It’s time windows. If your hypothesis is about an intrusion that could have happened anytime in the last 90 days but your logs only go back 30, that’s a coverage gap. Write it down.
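If a notebook table feels too loose, the same tracking can live in a few lines of Python. This is a minimal sketch, not a tool I’m prescribing. The `DataSource` class, the helper names, and the example source list are all made up for illustration. It separates sources you still owe a look from sources you couldn’t query but explained, and it computes the time-window gap from the 90-day versus 30-day example above.

```python
from dataclasses import dataclass


@dataclass
class DataSource:
    """One row of the coverage table (hypothetical structure)."""
    name: str
    queried: bool = False
    gap_reason: str = ""  # why it could not be queried, if that is the case


def open_items(sources):
    """Sources that are neither queried nor written down as a gap."""
    return [s.name for s in sources if not s.queried and not s.gap_reason]


def documented_gaps(sources):
    """Sources that were not queried but have a recorded reason."""
    return {s.name: s.gap_reason for s in sources if not s.queried and s.gap_reason}


def time_window_gap_days(hypothesis_days, retention_days):
    """Days the hypothesis covers that the available logs do not."""
    return max(0, hypothesis_days - retention_days)


# Illustrative source list for a PowerShell hunt (names are assumptions).
sources = [
    DataSource("EDR process telemetry", queried=True),
    DataSource("Windows script logging events"),
    DataSource("Proxy logs", gap_reason="no access during hunt window"),
]

print(open_items(sources))           # ['Windows script logging events']
print(documented_gaps(sources))      # {'Proxy logs': 'no access during hunt window'}
print(time_window_gap_days(90, 30))  # 60
```

If `open_items` returns anything, the hunt isn’t ready to close. Everything in `documented_gaps`, plus a nonzero time-window gap, belongs in the findings as a coverage gap.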

Diminishing Returns: The Same False Positive Three Times

There’s a pattern I see in every hunt that’s gone on too long. You start finding the same things over and over.

The same service account triggering the same alert. The same legacy application doing weird DNS stuff. The same admin using RDP at odd hours because they’re in a different time zone. You’ve already investigated these. You’ve already ruled them out. But you keep bumping into them because your queries are broad enough to catch them.

When this starts happening, pay attention. It’s a signal.

Not a signal that there’s nothing to find. A signal that your current approach has extracted all the value it’s going to. You’ve saturated your search space at this level of granularity.

At this point you have three choices:

1. Refine your queries to filter out the known noise and look deeper

2. Shift your approach entirely (different data source, different technique, pivot to a related hypothesis)

3. Acknowledge you’ve hit the floor and document what you found (including the noise)

Option 3 is valid. I know it feels like quitting. It’s not. It’s recognizing that more time in this direction won’t change the outcome. That’s professional judgment, not laziness.
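You can put a rough number on that “same things over and over” feeling. The sketch below is one way to do it, under assumptions I’m inventing for illustration: each query round gives you a list of entity names, you keep a set of entities you’ve already ruled out, and a 90% threshold over three rounds counts as saturated. Tune both numbers to your environment.

```python
def noise_ratio(hits, known_benign):
    """Fraction of hits whose entity was already investigated and ruled out."""
    if not hits:
        return 0.0
    repeats = sum(1 for entity in hits if entity in known_benign)
    return repeats / len(hits)


def looks_saturated(recent_ratios, threshold=0.9, runs=3):
    """True when the last `runs` query rounds were almost entirely known noise."""
    if len(recent_ratios) < runs:
        return False
    return all(r >= threshold for r in recent_ratios[-runs:])


# Hypothetical entities already triaged as benign.
known_benign = {"svc-backup", "legacy-app01", "admin-apac"}

# Hypothetical hits from four successive query rounds.
rounds = [
    ["svc-backup", "wkstn-114", "legacy-app01"],
    ["svc-backup", "legacy-app01", "admin-apac"],
    ["svc-backup", "svc-backup", "admin-apac"],
    ["legacy-app01", "admin-apac"],
]

ratios = [noise_ratio(r, known_benign) for r in rounds]
print(looks_saturated(ratios))  # True
```

A `True` here doesn’t mean nothing is there. It means it’s time to pick one of the three options instead of running the same broad query again.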

Time-Boxing vs. Completeness

Every hunting team I’ve worked with has some version of this tension. Leadership wants hunts scoped to a sprint. Two days, a week, whatever fits the roadmap. Meanwhile, the hunter is sitting there thinking “but I haven’t checked the cloud logs yet.”

Here’s my take: time-boxing is necessary but not sufficient.

You need time constraints. Without them, hunts expand forever. I’ve seen it. A two-week hunt becomes a month because the hunter keeps pulling threads. Some of those threads matter. Most don’t. Without a boundary, there’s no forcing function to prioritize.

But a time box alone doesn’t tell you whether you’re done. It tells you when you have to stop. Those aren’t the same thing.

What I recommend: set your time box upfront, but also define your minimum coverage criteria upfront. If you hit the time box before you hit your coverage criteria, you have a decision to make. And that decision should be documented, not just made silently.

“Hunt time-boxed to 3 days. Completed analysis of Windows event logs, Sysmon, and EDR telemetry. Did NOT complete review of cloud audit logs or email gateway logs due to time constraints. Recommend follow-up hunt or including these sources in next cycle.”

That’s a responsible close. Compare that to: “Hunt complete. No findings.” Same outcome, completely different level of honesty about what you actually did.
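If you want that kind of close to be the default rather than something you remember on a good day, generate it from what you actually tracked. A minimal sketch follows. The function name and wording are my own, modeled on the example statement above.

```python
def close_statement(time_box_days, completed, not_completed):
    """Build a closing note that states what was and was not examined."""
    parts = [f"Hunt time-boxed to {time_box_days} days."]
    if completed:
        parts.append(f"Completed analysis of {', '.join(completed)}.")
    if not_completed:
        parts.append(
            f"Did NOT complete review of {', '.join(not_completed)} "
            "due to time constraints."
        )
        parts.append(
            "Recommend follow-up hunt or including these sources in next cycle."
        )
    else:
        parts.append("All scoped sources reviewed within the time box.")
    return " ".join(parts)


statement = close_statement(
    3,
    ["Windows event logs", "Sysmon", "EDR telemetry"],
    ["cloud audit logs", "email gateway logs"],
)
print(statement)
```

The point of the design is that the unreviewed sources are an input to the function. You can’t produce the note without stating them.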

The Confidence Spectrum

This is the thing I wish every hunter would internalize: “I found nothing” and “I am confident nothing is there” are wildly different statements.

“Found nothing” means your queries didn’t return hits. That’s a fact about your queries, not a fact about your environment.

“Confident nothing is there” means you examined the right data, with sufficient coverage, over the right time period, using techniques appropriate to the threat, and you can explain why the absence of evidence is meaningful.

Most hunts end with the first statement pretending to be the second.

I think about this as a spectrum:

Low confidence: “Ran some queries, no hits.” You looked, but not deeply.

Medium confidence: “Examined primary data sources for indicators consistent with hypothesis. No evidence found, but coverage gaps exist in X and Y.”

High confidence: “Examined all relevant data sources across the full time window. Validated detection logic against known-good simulations. No evidence of the hypothesized behavior. Coverage gaps: none identified.”

Most hunts land somewhere in the medium range. That’s fine. But say so. Don’t let a medium-confidence hunt get reported as high-confidence just because it sounds better in a slide deck.
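To keep the label honest, you can derive it from facts about the hunt instead of picking it by feel. The function below is my simplification of the spectrum above, not a standard. The four inputs and the rule that any gap caps you at medium are assumptions you should adjust.

```python
def confidence_level(primary_sources_examined, coverage_gaps,
                     full_time_window, detection_logic_validated):
    """Map facts about the hunt to a low / medium / high label."""
    if not primary_sources_examined:
        # "Ran some queries, no hits."
        return "low"
    if not coverage_gaps and full_time_window and detection_logic_validated:
        # Everything examined, full window, logic validated, no gaps.
        return "high"
    # Primary sources examined, but something is still missing.
    return "medium"


print(confidence_level(
    primary_sources_examined=True,
    coverage_gaps=["cloud audit logs"],
    full_time_window=True,
    detection_logic_validated=True,
))  # medium
```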

Documentation as Closure

A hunt is not done until it’s written down.

I don’t care if you found something or not. Null findings are findings. They’re data points that inform future hunts, justify detection investments, and build institutional knowledge about what you’ve looked at and when.

If you close a hunt with no documentation, it’s like it never happened. Six months from now, someone will hunt for the exact same thing because nobody recorded that you already did.

At minimum, your hunt closure document should include:

Hypothesis: What were you looking for and why?

Scope: What environment, data sources, and time window?

Coverage: What did you actually examine? What didn’t you get to?

Findings: What did you find? Include false positives worth noting.

Confidence level: How confident are you in the result?

Recommendations: Detections to build, data gaps to fix, follow-up hunts to schedule.

Time spent: How long did this actually take?

That last one matters more than people think. If you’re tracking time spent per hunt, you start to build a picture of what types of hunts are expensive versus cheap. That data helps you plan better.

I know documentation isn’t sexy. Nobody got into threat hunting to write reports. But documentation is what turns a hunt from an activity into an artifact. Artifacts compound over time in ways that individual hunts don’t.
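One way to make that artifact easy to keep and search is to store the closure fields as structured data. Here’s a minimal sketch using a dataclass that serializes to JSON. The field names mirror the list above, the class name is my own, and the example values are invented.

```python
import json
from dataclasses import dataclass, field, asdict


@dataclass
class HuntClosure:
    """Closure record with the minimum fields listed above."""
    hypothesis: str
    scope: str
    coverage_examined: list
    coverage_gaps: list
    findings: list   # an empty list is a null finding, and still gets recorded
    confidence: str  # "low", "medium", or "high"
    recommendations: list = field(default_factory=list)
    hours_spent: float = 0.0

    def to_json(self):
        return json.dumps(asdict(self), indent=2)


# Hypothetical example values.
record = HuntClosure(
    hypothesis="Attackers are using PowerShell for lateral movement",
    scope="Windows servers, last 30 days",
    coverage_examined=["Windows event logs", "Sysmon", "EDR telemetry"],
    coverage_gaps=["cloud audit logs"],
    findings=[],
    confidence="medium",
    recommendations=["Schedule follow-up hunt covering cloud audit logs"],
    hours_spent=18.5,
)
print(record.to_json())
```

Anything that can be dumped to JSON can be dropped into a repo or a wiki, which is what makes it findable six months later.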

Where This Fits in PEAK

If you use PEAK (and if you don’t, here’s a template to get started), hunt termination should be baked into the Prepare phase.

PEAK has four phases: Prepare, Execute, Act, and Knowledge. Most people pour their energy into Execute because that’s where the actual hunting happens. But Prepare is where you define what success looks like, and that includes defining what “done” looks like.

When you’re building your hypothesis in Prepare, add termination criteria right next to it:

What data sources must I examine before I can call this complete?

What’s my time box?

What confidence level am I targeting?

What would “good enough” look like if I can’t hit full coverage?

Then, in the Act phase, your closure documentation naturally includes an assessment against those criteria. Did you meet them? If not, why not?
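Here’s a rough sketch of what that pairing could look like in code: criteria written down in Prepare, then checked in Act. Nothing here is part of PEAK itself. The class, the function, and the example values are hypothetical.

```python
from dataclasses import dataclass

CONFIDENCE_ORDER = ["low", "medium", "high"]


@dataclass
class TerminationCriteria:
    """Answers to the four Prepare-phase questions above."""
    required_sources: set
    time_box_days: float
    target_confidence: str
    good_enough_sources: set  # minimum acceptable if full coverage fails


def assess(criteria, sources_examined, days_spent, confidence):
    """Compare what actually happened against the criteria set in Prepare."""
    examined = set(sources_examined)
    return {
        "full_coverage": criteria.required_sources <= examined,
        "good_enough": criteria.good_enough_sources <= examined,
        "within_time_box": days_spent <= criteria.time_box_days,
        "confidence_met": CONFIDENCE_ORDER.index(confidence)
        >= CONFIDENCE_ORDER.index(criteria.target_confidence),
        "missing_sources": sorted(criteria.required_sources - examined),
    }


criteria = TerminationCriteria(
    required_sources={"Windows event logs", "Sysmon", "EDR telemetry",
                      "cloud audit logs"},
    time_box_days=3,
    target_confidence="medium",
    good_enough_sources={"Sysmon", "EDR telemetry"},
)
result = assess(
    criteria,
    ["Windows event logs", "Sysmon", "EDR telemetry"],
    days_spent=3,
    confidence="medium",
)
print(result["missing_sources"])  # ['cloud audit logs']
```

The output answers “did you meet them?” and the `missing_sources` list is where the “if not, why not?” explanation starts.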

The Knowledge phase is where this really pays off. When you capture termination criteria and coverage assessments alongside your findings, you’re building a body of knowledge about your hunting capability, not just your hunting results. Over time, you can answer questions like “how often do we hit our coverage targets?” and “where are our persistent blind spots?”

That’s the kind of thing that separates hunting programs that stick around from ones that fizzle out after a year.
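Those two questions are easy to answer once closure records are structured. The sketch below assumes each past hunt is a dict with a `coverage_gaps` list. That shape, the function names, and the sample hunts are made up for illustration.

```python
from collections import Counter


def coverage_hit_rate(hunts):
    """Share of hunts that closed with no coverage gaps."""
    if not hunts:
        return 0.0
    met = sum(1 for h in hunts if not h["coverage_gaps"])
    return met / len(hunts)


def persistent_blind_spots(hunts, min_hunts=2):
    """Data sources that show up as a gap in at least `min_hunts` hunts."""
    counts = Counter(gap for h in hunts for gap in set(h["coverage_gaps"]))
    return [(src, n) for src, n in counts.most_common() if n >= min_hunts]


# Hypothetical history of closed hunts.
hunts = [
    {"name": "hunt-a", "coverage_gaps": ["cloud audit logs"]},
    {"name": "hunt-b", "coverage_gaps": []},
    {"name": "hunt-c", "coverage_gaps": ["cloud audit logs", "email gateway logs"]},
    {"name": "hunt-d", "coverage_gaps": ["cloud audit logs"]},
]

print(coverage_hit_rate(hunts))       # 0.25
print(persistent_blind_spots(hunts))  # [('cloud audit logs', 3)]
```

A source that keeps appearing in that second list is a data access or retention problem, not a hunting problem, and it’s worth raising as one.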

The Hunt Closure Checklist

OK, I promised something practical. Here’s a checklist your team can steal and adapt.

Before You Close the Hunt

[ ] All scoped data sources have been queried (or gaps documented)

[ ] Queries reviewed for correctness, not just “no hits”

[ ] If nothing found, you can explain why the absence is meaningful

[ ] Findings documented (including null findings)

[ ] Confidence level stated (low / medium / high)

[ ] Follow-up recommendations and detection opportunities logged

[ ] Hunt artifacts (queries, scripts) saved somewhere retrievable

Red Flags That You’re Stopping Too Early

You haven’t looked at all the data sources you scoped

Your queries are too narrow (you’d miss variants of the behavior)

You found something interesting but didn’t follow up because time ran out

You’re closing the hunt to hit a metric, not because you’re done

Red Flags That You’ve Gone Too Long

You keep finding the same false positives and re-investigating them

You’re expanding scope beyond the original hypothesis without a clear reason

You’ve shifted from “hunting” to “exploring” with no specific goal

The hunt has consumed more than 2x its original time box

Closing Thoughts

Knowing when to stop is a skill. It gets better with practice and worse with neglect. The checklist above is a starting point, not a religion. Adapt it. Argue about it with your team. Throw out the parts that don’t work.

Even imperfect criteria are better than none. You can always refine them. You can’t refine a gut feeling.

Now go finish that hunt you’ve been sitting on. You know the one.

Happy thrunting!